“I am working on a simulation study that requires me to generate data for individuals within clusters, but each individual will have repeated measures (say baseline and two follow-ups). I’m new to simstudy and have been going through the examples in R this afternoon, but I wondered if this was possible in the package, and if so whether you could offer any tips to get me started with how I would do this?”

This question popped up in my in-box a couple of days ago. And since I always like an excuse to do a little coding early in the morning to get my brain going, I decided to create a little example, though in this case, there were at least two ways to go about it. I sent back both options, and am putting them up here, since I know this kind of data generation problem comes up frequently. In fact, the post I recently wrote on open cohorts in stepped-wedge designs had to deal with this same issue, though in a slightly more elaborate way.

Three-level hierarchical data

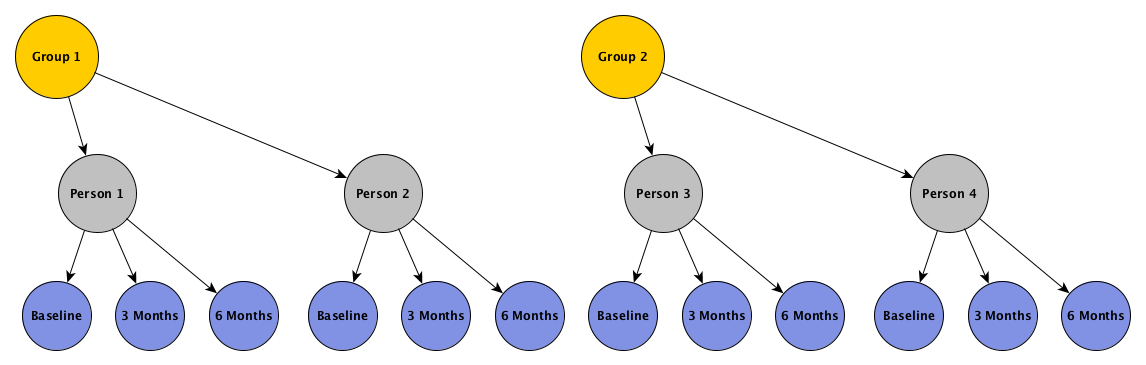

In this example, we want individuals clustered within groups, and measurements clustered within individual, as depicted by this figure:

The hierarchical scheme represented implies that outcomes for individuals within groups are correlated, and that measurements over time for a particular individual are correlated. The structure of these two levels of correlation can take on a variety of forms. In the examples that follow, I am going to assume that the correlation between the individuals in a group is constant, as are the individual measurements over time. We could easily make the assumption that measurements closer in time will be more highly correlated than measurements further apart in time (such as auto-regressive correlation with 1 period of lag), but since we have only three measurements, it is not totally unreasonable to assume constant correlation.

Generating data explicitly with random effects

Enough with the preliminaries - let’s get to the data generation. In the first approach, both levels of correlation will be induced with group- and individual-level random effects using the following underlying model:

where is the outcome for person in group during time period . is the mean outcome during period , . is the group-specific effect, and . is the individual-specific effect, and . Finally, is the noise for each particular measurement, where .

The group, individual, and outcome definitions are the first order of business. In this example, , , and . In addition, the average outcomes at baseline, 3 months and 6 months, are 3, 4, and 6, respectively:

library(simstudy)

### Group defintion

defg <- defData(varname = "gamma", formula=0, variance = 2, id = "cid")

### Individal definition

defi <- defDataAdd(varname = "alpha", formula = 0, variance = 1.3)

### Outcome definition

defC <- defCondition(condition = "period == 0",

formula = "3 + gamma + alpha",

dist = "nonrandom")

defC <- defCondition(defC, condition = "period == 1",

formula = "4 + gamma + alpha",

dist = "nonrandom")

defC <- defCondition(defC, condition = "period == 2",

formula = "6 + gamma + alpha",

dist = "nonrandom")

defy <- defDataAdd(varname = "y", formula = "mu", variance = 1.1)To generate the data, first we create the group level records, then the individual level records, and finally the repeated measurements for each individual:

set.seed(3483)

dgrp1 <- genData(100, defg)

dind1 <- genCluster(dgrp1, "cid", numIndsVar = 20, level1ID = "id")

dind1 <- addColumns(defi, dind1)

dper1 <- addPeriods(dind1, nPeriods = 3, idvars = "id")

dper1 <- addCondition(defC, dper1, newvar = "mu")

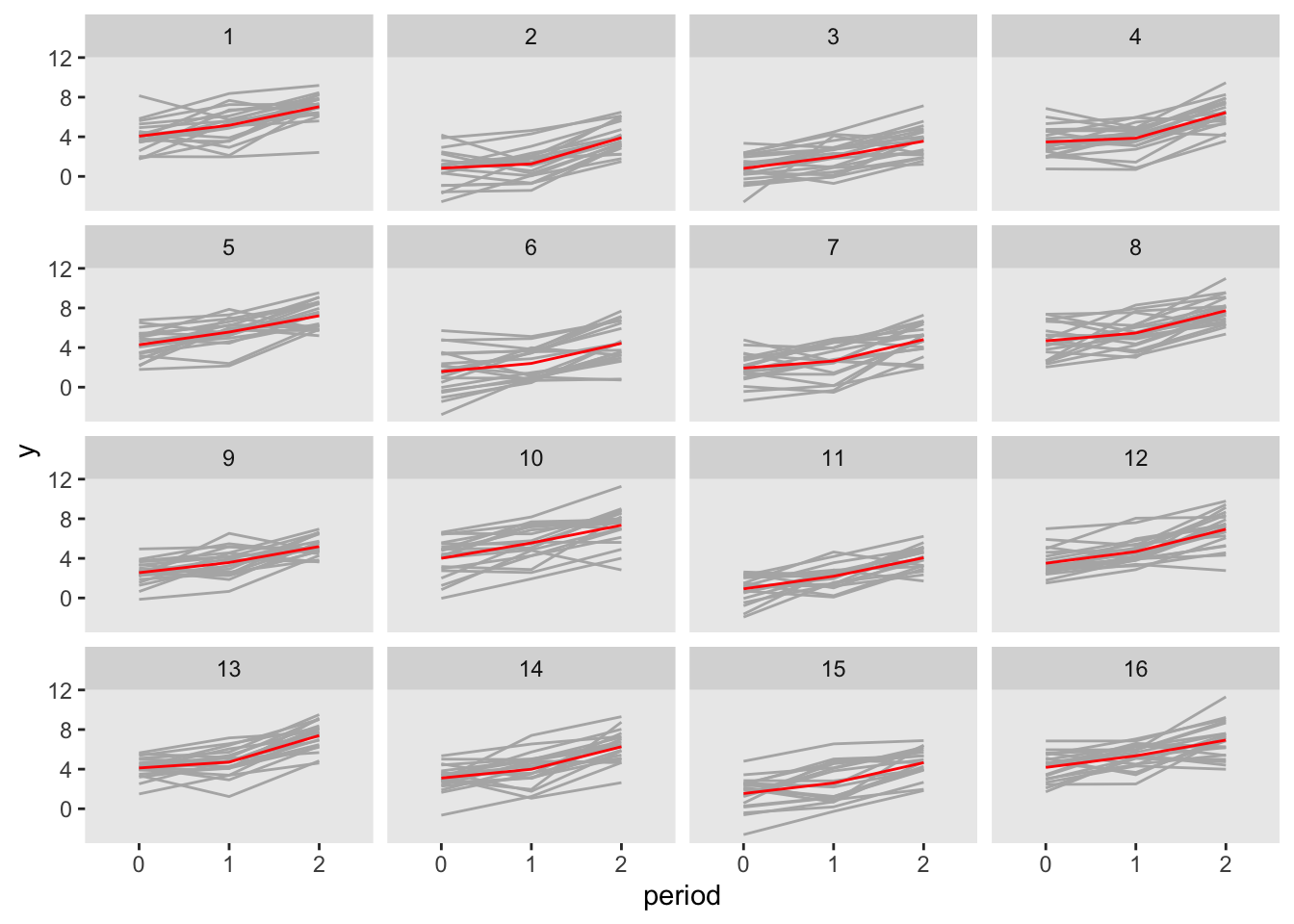

dper1 <- addColumns(defy, dper1)Here is a plot of the outcome data by period, with the grey lines representing individuals, and the red lines representing the group averages:

Here is a calculation of the observed covariance matrix. The total variance for each outcome should be close to , and the observed covariance should be close to

dcor1 <- dcast(dper1, id + cid ~ period, value.var = "y")

setnames(dcor1, c("id", "cid", "y0", "y1", "y2"))

dcor1[, cov(cbind(y0, y1, y2))]## y0 y1 y2

## y0 4.5 3.2 3.4

## y1 3.2 4.2 3.2

## y2 3.4 3.2 4.6The correlation show be close to

(For a more elaborate derivation of correlation coefficients, see this post on stepped-wedge designs.)

dcor1[, cor(cbind(y0, y1, y2))]## y0 y1 y2

## y0 1.00 0.73 0.75

## y1 0.73 1.00 0.73

## y2 0.75 0.73 1.00